Imagine this: your team has recently become hyper-productive, even though it is small. Great situation, right?

Unfortunately, it also means your best senior developer is slowly being crushed by the queue of things that need reviewing. The rest of the team does not know the domain quite as well as she does, so if the review is supposed to carry real weight, the code needs to pass by her.

It is not the most enjoyable position for your senior developer to be in. She regularly sighs over the career choices that somehow led her here, but she does her duty and gives thorough feedback on all the code.

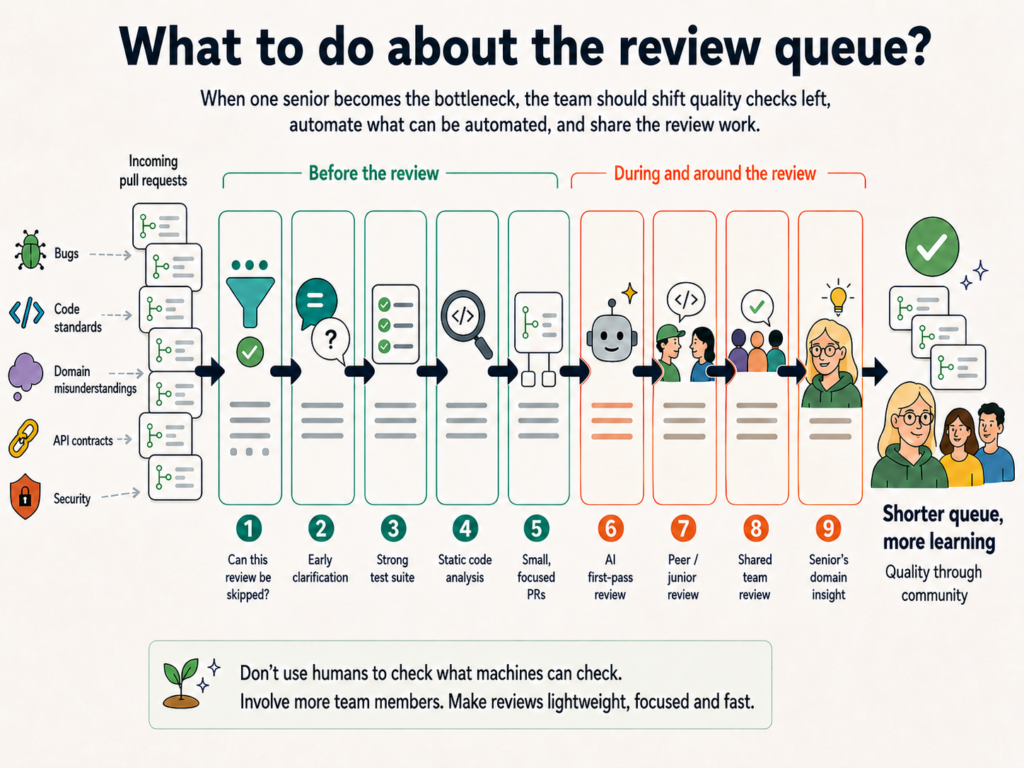

So what do we do as a team?

We could start by looking at why we review in the first place. Is the review a quality assurance activity meant to prevent bugs? Are we checking whether our coding standards have been followed? Is the review about knowledge sharing, so the team can maintain a shared overview? Or is it an approval process?

Are we checking that the code actually addresses the problem and that there have not been any misunderstandings about the domain or the problem itself? Are we checking the Definition of Done? The architecture? Non-functional requirements? Whether we still respect our API contracts? Security issues? Have we introduced a new way of doing things that needs to be discussed?

When we know what we need from the review, it becomes much easier to optimize it.

A good place to start is to see whether some reviews can be skipped. Is this a prototype? Is it a very simple, limited change that could have been made by a four-year-old? Was the code written through pair programming or mob programming, and therefore perhaps already reviewed? Make a risk assessment and decide whether the review is actually needed.

But many reviews probably cannot be skipped, so what do we do with them? We can look at the reason for the review and see whether that concern can be addressed in other, preferably automated, ways.

If we review to avoid bugs, then a strong test suite may be part of the answer, along with a shared culture of quality. Do we trust our tests? Have we invested time in them? Are the primary flows well covered?

Static code analysis can also address a good number of review concerns. It can find dead code, incorrect API usage, common security issues such as SQL injection, duplicated code, long methods, broken naming conventions, poor formatting, and even some performance problems.

In general, humans should not be used to check things that machines can check.

We can also look at whether we can help earlier in the process, for example through pair programming, so misunderstandings are caught sooner.

Then there is the process around the review itself. Does it have to be the senior developer who reviews? Can someone else do a partial review? Does the senior developer have to review alone? Can we review together, so everyone in the team gains understanding? Can a junior developer review before a senior developer and ask questions – of varying quality – that may still catch something important?

A junior developer may have exactly the right eyes to see whether the code is understandable. If not all team members can understand the code, is it good code?

Can we use AI for a first-pass review? Can we make sure that a pull request has a reasonable size and does not touch 100 different files while addressing four different issues? And how do we handle a review when we actually do need to upgrade a library and therefore touch 100 different files, even though we are only addressing one issue? Perhaps we handle that together.

In other words, we can catch many things before the review process reaches the senior developer we met at the beginning. We can serve the code on a silver platter, so she only has to review the domain understanding that may not have been caught earlier.

And not just the code. Make the whole review process easy and fast. It should not require moving through several different systems or writing a report based on the review. Maybe the review is more of an informal conversation. Do what works for your team.

If other measures have been taken first, the senior developer can trust the code on a wide range of points. That makes the review shorter and pulls her out of flow for less time. And if she brings the whole team into the review process, we may even get to a place where everyone on the team can review with the same level of trust.

It is not quite as cold at the top if we stand there together.

So, to summarize:

Do not use humans to check what can be checked automatically.

Bring more team members along for the journey. It is also more fun.

Optimize the review process by serving the task on a silver platter and keeping it lightweight.

Would love to hear more strategies. What do YOU do about the review queue?

There is a reason the old myths still work.

Narcissus falls in love with his own reflection, unable to recognize it as himself. He does not fall in love with another person. He falls in love with an image that gives him back exactly what he wants to see.

That myth feels surprisingly modern in the age of AI.

AI is a mirror. When we talk to a chatbot, we get something of ourselves back: our questions, assumptions, vocabulary, interests, fears, and style of thinking. If we are curious, it reflects curiosity. If we are confused, it reflects confusion. If we are angry, it can reflect anger back in a more polished form.

But a reflection can still distort and intensify. A thoughtful person can use AI to explore more options, test arguments, find weak spots, draft alternatives, and sharpen their thinking. But it also amplifies self-deception. If we want confirmation, avoidance, grievance, or flattery, AI can provide those too, fluently and persuasively.

Human relationships push back. Other people challenge us, interrupt us, confuse us, and refuse to become exactly what we want them to be. That friction is part of the difficulty of real relationships. It is also part of their value.

AI can feel easier. It is patient, available, affirming, and endlessly adaptive. It does not get tired in the human sense. It does not have its own day, its own body, its own needs, or its own inconvenient reality.

That is part of its usefulness, and also part of its danger. The more natural the conversation feels, the easier it becomes to forget what we are speaking to. AI can be useful without being aware. It can sound thoughtful without thinking. This matters because we are very good at seeing personhood where there is none. When something answers warmly, remembers our preferences, flatters our thinking, and responds in the rhythm of conversation, we are tempted to treat it as a presence. But the presence is an illusion. What feels like another being looking back is only a highly convincing arrangement of language.

AI is not a thinking being trapped inside a machine. It is the water itself: a surface that returns our image. When it seems human, that is because it is reflecting the human in us.

Are you afraid of missing out on the next big thing? Afraid of being left standing on the platform when the train departs?

Gold fever has gripped people throughout history. In 1848, 300,000 people flocked to California because GOLD had been found at Sutter’s Mill. Thousands of startups were created when the Internet became the new gold vein. Cryptocurrency creates winners and losers every day with huge price swings.

Gold rushes can be recognized by a number of elements:

- Extreme hype and optimism

- A low barrier to entry in the beginning

- High risk, few winners

- Infrastructure and services rapidly emerging around them

- Many people quickly flocking to exploit the phenomenon

- The possibility of quick riches driving behavior

The psychology is driven by FOMO. “My cousin found gold in California.” “My colleague bought Bitcoin for 100 kroner and became a millionaire.” It creates the feeling that you are missing out on an obvious opportunity if you do not jump on the bandwagon right now.

But we only hear the good stories. The people who find gold nuggets, the startups that are sold for billions, and the crypto bros who got rich. We rarely hear about the vast majority who lost the game.

It feels safe to do what everyone else is doing. Herd mentality can drive us. At the same time, we overestimate our own abilities. Surely we are even better at finding the gold or spotting the gap in the market.

The typical “gold rush cycle”:

- Discovery / innovation

- Early winners

- Hype and mass entry

- Overinvestment / speculation

- Crash or consolidation

- A few strong companies survive

The truth is that it is rarely the masses who make money in a gold rush. It is the people selling work clothes and shovels to the gold diggers. The people running the bar where the gold diggers relax after a hard day’s work, and the people operating the only hotel in the gold mining town. Infrastructure, platforms, and tools.

And then there is the economy after the gold rush is over. The people who bought up failed startups during the dot-com bubble and made good acqui-hires. The people who bought equipment back from gold diggers who gave up, paying only a fraction of its value. The people who picked up new technology or patents cheaply when startups ran out of investor money.

After the dot-com bubble, there were enormous amounts of cheap fiber internet, data centers, and software expertise available – for those who still had their money intact, or had not lost more than they could afford.

A few winners, and many losers.

I have worked in many big companies as a freelancer and as a Scrum Master, and one takeaway for me has been how often there is mistrust between “the business side” and “the development team”. As a Scrum Master, it has been my job to bring the two sides together, remind them that we are nothing without each other, and hopefully bring everyone involved a bit more understanding of all aspects of developing software and doing business with it.

As a Scrum Master, I have coached or taught many Product Owners, and the main lessons have been: Trust the team. Tell them (somewhat clearly) what you need and why, and then work with them to get to a good solution. Listen to their concerns – it will help you in the long term.

I have also had to work with many teams to (re)build trust in the Product Owner: to help them communicate their concerns clearly, understand the role, and understand why we are not always aligned in our wishes for the product. And, hopefully, to highlight when the Product Owner listens to the team and help show why the Product Owner makes those product decisions.

What has been true for all the journeys my teams have been through is that the work has flowed much better with trust and understanding between the people involved.

A trick I often use is to nudge the language used. Nudge the Product Owner to always say “we” and the team to include the Product Owner in the “we” that they already have in their heads. It is a dirty trick, because it seems like such a small thing, but it has a big effect on the team’s self-image. Seen over a long period of time, you can really appreciate the difference. No more “us and them” language. “We” are taking on responsibility for mistakes made and “we” are the actors in the successes achieved.

Talking to all team members about how we can play different roles with different viewpoints, and that these viewpoints need to be balanced and respected, is key. Maybe a few team members are especially good at remembering the architecture view, the security view, the code quality view, the business view or the user feedback view, and all of these are important. Probably not equally important, but weighed in a way that depends on our situation and that can change over time. We can disagree on the trade-offs we make, but we have to respect that there are trade-offs that have to be made and trust that when we make those decisions, we do it with good intentions and to the best of our knowledge at the time.

There is an excellent way of expressing this trust, the Prime Directive:

“Regardless of what we discover, we understand and truly believe that everyone did the best job they could, given what they knew at the time, their skills and abilities, the resources available, and the situation at hand.”

–Norm Kerth, Project Retrospectives: A Handbook for Team Review

The prime directive is usually mentioned in the context of the retrospective, but they are really words to live by. I say: Hang a poster with it in your workspace and remind everyone about it often. To build software well as a team, we need trust.

As a developer coming from the .NET world, where LINQ is a first-class citizen, it can seem that some methods are missing when you move to JavaScript. There are even a few libraries that try to remedy this, but if you are just looking to get the job done, the most often used methods are right at hand. (If you are looking for features such as lazy evaluation or observables, linq.js and RxJS offer these.)

In the following I’ll list the most used operations and their JavaScript equivalents, cheat sheet style. Note that I’m using arrow functions (lambda expressions) in JavaScript to keep the code concise. If they aren’t available, just replace expressions like:

(data) => {}

with

function(data) {}

All operations are executed against this example:

var persons = [

{ firstname: 'Peter', lastname: 'Jensen', type: 'Person', age: 30 },

{ firstname: 'Anne', lastname: 'Jensen', type: 'Person', age: 50 },

{ firstname: 'Kurt', lastname: 'Hansen', type: 'Person', age: 40 }

];

Notice that some of the operations modify the source array. If you don’t want that, just clone it with:

persons.slice(0);

Every operation is named after the method’s name in LINQ:

var result = persons.All(person => person.type == "Person");

var result = persons.filter(person => person.type == 'Person').length == persons.length;

var result = persons.Concat(persons);

var result = persons.concat(persons);

var result = persons.Count();

var result = persons.length;

var lastnames = persons.Select(person => person.lastname);

var result = lastnames.Distinct();

var lastnames = persons.map(person => person.lastname);

var result = lastnames.filter((value, index) => lastnames.indexOf(value) == index);

var result = Enumerable.Empty<dynamic>();

var result = [];

var result = persons.First();

var result = persons[0];

if (!result) throw new Error('Expected at least one element to take first')

var result = persons.FirstOrDefault();

var result = persons[0];

var fullnames = new List<string>();

persons.ForEach(person => fullnames.Add(person.firstname + " " + person.lastname));

var fullnames = []; persons.forEach(person => fullnames.push(person.firstname + ' ' + person.lastname))

var result = persons.GroupBy(person => person.lastname);

var result = persons.reduce((previous, person) => {

(previous[person.lastname] = previous[person.lastname] || []).push(person);

return previous;

}, {});

var result = persons.IndexOf(persons[2]);

var result = persons.indexOf(persons[2]);

var result = persons.Last();

var result = persons[persons.length-1];

if (!result) throw new Error('Expected at least one element to take last')

var result = persons.LastOrDefault();

var result = persons[persons.length-1];

var result = persons.OrderBy(person => person.firstname);

persons.sort((person1, person2) => person1.firstname.localeCompare(person2.firstname));

var result = persons.OrderByDescending(person => person.firstname);

persons.sort((person1, person2) => person2.firstname.localeCompare(person1.firstname));

persons.Reverse();

var result = persons.reverse();

var result = persons.Select(person => new {fullname = person.firstname + " " + person.lastname});

var result = persons.map(person => ({ fullname: person.firstname + ' ' + person.lastname }) );

var result = persons.Single(person => person.firstname == "Peter");

var onePerson = persons.filter(person => person.firstname == "Peter");

if (onePerson.length != 1) throw new Error('Expected exactly one element to take single')

var result = onePerson[0];

var result = persons.Skip(2);

var result = persons.slice(2, persons.length);

var result = persons.Take(2);

var result = persons.slice(0, 2);

var result = persons.Where(person => person.lastname == "Jensen");

var result = persons.filter(person => person.lastname == 'Jensen');
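For comparison, a few of the operations above can also be written with other built-in helpers. This is only a sketch, assuming an ES2015-capable runtime (for `Set` and `Array.from`); the variable names are made up for illustration and are not taken from the code on GitHub:

```javascript
// Same sample data as in the post.
var persons = [
  { firstname: 'Peter', lastname: 'Jensen', type: 'Person', age: 30 },
  { firstname: 'Anne', lastname: 'Jensen', type: 'Person', age: 50 },
  { firstname: 'Kurt', lastname: 'Hansen', type: 'Person', age: 40 }
];

// All: every() stops at the first element that fails the test.
var allArePersons = persons.every(person => person.type === 'Person');

// Distinct: a Set keeps only unique values, in insertion order.
var distinctLastnames = Array.from(new Set(persons.map(person => person.lastname)));

// OrderBy without touching the source: sort a copy made with slice().
var byFirstname = persons
  .slice(0)
  .sort((person1, person2) => person1.firstname.localeCompare(person2.firstname));
```

The copy in the last example matters because `sort` reorders the array it is called on, which is easy to forget when coming from LINQ, where `OrderBy` leaves the source untouched.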

So clearly you don’t need a library if you only need the basic operations. The code above with tests is available at GitHub.

This post is also available in Danish at QED.dk.

One day when I was surfing ~~cat videos~~ professionally relevant videos on YouTube, I noticed a red progress indicator:

My first thought was – I want this in my apps. How did they do it?

If you lower the bandwidth a pattern emerges:

Aha! When you click on a video, YouTube starts a request to fetch information about it, animates the bar to 60%, where it waits until the call is completed, and finally animates it to 100%.
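The pattern is simple enough to sketch in a few lines. This is a guess at the idea, not YouTube’s code: `trackRequest` and the callback are hypothetical names, the animation is reduced to two progress values, and a standard `Promise` stands in for the real request:

```javascript
// Report 60% right away, then 100% once the request has completed.
function trackRequest(request, onProgress) {
  onProgress(60); // optimistic jump while the request is still in flight
  return request.then(function (result) {
    onProgress(100);
    return result;
  });
  // If the request fails, the rejection is passed on to the caller,
  // who should show an error rather than leave the bar at 60%.
}

var shown = [];
var tracked = trackRequest(Promise.resolve({ title: 'Video' }), function (percent) {
  shown.push(percent);
});
```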

Utter deception but without doubt a well thought-out solution. As long as you are on a sufficiently fast connection and the amount of data that needs to be transferred is limited the illusion is complete.

It has to be said that even if Youtube cheats a little it is a much better solution than those spinners you see on the majority of sites with asynchronous requests today:

It gives no sense of progress and no indication of whether the transfer has stopped. I’ve also experienced many sites where errors aren’t handled correctly and you end up with an eternal spinner – or at least until you lose patience and refresh the page.

Dan Saffer expresses it in simple terms in the book Designing Gestural Interfaces: Touchscreens and Interactive Devices

Progress bars are an excellent example of responsive feedback: they don’t decrease waiting time, but they make it seem as though they do. They’re responsive.

With the very diverse connection speeds we have today, I’d say that the need is even greater. The YouTube example from before might hit the sweet spot on an average connection, but if you are far from high-speed connectivity, maybe on a mobile connection with EDGE (never mind that you probably cannot see the video itself), the wait can easily outlast your patience.

As Jakob Nielsen writes in Usability Engineering, feedback is important, especially if the response time varies:

10 seconds is about the limit for keeping the user’s attention focused on the dialogue. For longer delays, users will want to perform other tasks while waiting for the computer to finish, so they should be given feedback indicating when the computer expects to be done. Feedback during the delay is especially important if the response time is likely to be highly variable, since users will then not know what to expect.

Requirements for the solution

There are many ways to add continuous feedback, but each comes with its own limitations. To be able to use the solution in most problem areas, we need to set some requirements:

The solution should:

- Be integratable into existing solutions without too much extra work and (almost) without changes to the server.

- Work across domains, when the client lives on one domain and communicates with a server on a different one (also called Cross-Origin Resource Sharing, or CORS).

- Work in all browsers.

- Be able to send a considerable amount of data both back and forth.

The first attempts

When communicating with a server from JavaScript, you normally start a request and a little while later get a status indicating whether the call succeeded. So there is no continuous feedback. The naive solution would be to make multiple separate requests, but each request carries relatively expensive overhead, so the combined overhead would be too big.

HTTP/1.1 makes it possible to split a response from the server into multiple parts; the method is called chunked transfer encoding (chunked HTTP):

If we send an agreed number of chunks, we are able to tell the user how far the request has come.
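The bookkeeping on the client can be sketched like this. It is a minimal illustration only: `createChunkTracker` is a hypothetical helper, and how the chunks actually reach the page is left out:

```javascript
// Hypothetical helper: turns "one more chunk arrived" into a percentage,
// given the number of chunks client and server have agreed on.
function createChunkTracker(totalChunks, onProgress) {
  var received = 0;
  return function chunkArrived() {
    // Unexpected extra chunks never push the value past 100%.
    if (received < totalChunks) {
      received += 1;
    }
    var percent = Math.round((received / totalChunks) * 100);
    onProgress(percent);
    return percent;
  };
}

var reported = [];
var chunkArrived = createChunkTracker(4, function (percent) {
  reported.push(percent);
});
for (var i = 0; i < 4; i++) {
  chunkArrived();
}
// reported is now [25, 50, 75, 100]
```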

Traditional AJAX call

Traditional AJAX calls, typically executed via XMLHttpRequest, generally don’t give any frequent feedback. If you want to execute one as a CORS call, you need to make changes to the server, and it will cost an extra request if the call carries authorization. Support in older browsers is limited.

JSONP

JSONP is an old technique to circumvent the CORS problems with AJAX. You basically add a script tag whose source lives on the remote server, and the script is then executed within the scope of the current page.

In a weak moment I tried to implement chunked http in a JSONP call – multiple JavaScript methods in one script that would then be executed continuously. Of course it didn’t work – the browser won’t execute any code until the JavaScript file has been fully loaded.

Other technologies

I also looked at Server-Sent Events, but that’s not supported by Internet Explorer.

WebSockets require a greater change on the server side and also pose some challenges with security, cookies, etc., since we are outside the traditional HTTP model.

Failure is always an option

Adam Savage

The final solution

HiddenFrame is a technique where you create a hidden iframe and let it fetch a lump of HTML from the server. If there are any script tags in this lump, they will be executed as the browser meets each end tag. So there we have a potential solution.

Sending data is no problem either because we can start the fetch itself by executing a form-post.
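To make the idea concrete, here is a sketch of what the streamed lump of HTML could consist of. It does not show LittleConvoy’s actual wire format: `toScriptChunk`, `buildFrameChunks`, the message shape, and the use of `window.postMessage` to reach a parent page on another origin are all assumptions made for illustration:

```javascript
// Hypothetical server-side helper: wraps a message in a script tag that the
// hidden iframe executes as soon as the closing tag has arrived.
function toScriptChunk(message, targetOrigin) {
  // Escape "<" so the JSON payload can never close the script tag early.
  var payload = JSON.stringify(message).replace(/</g, '\\u003c');
  return '<script>parent.postMessage(' + payload + ', ' +
    JSON.stringify(targetOrigin) + ');</script>';
}

// One chunk per progress step, followed by a final chunk carrying the result.
function buildFrameChunks(steps, result, targetOrigin) {
  var chunks = [];
  for (var step = 1; step <= steps; step++) {
    var percent = Math.round((step / steps) * 100);
    chunks.push(toScriptChunk({ progress: percent }, targetOrigin));
  }
  chunks.push(toScriptChunk({ done: true, data: result }, targetOrigin));
  return chunks;
}

var chunks = buildFrameChunks(2, { name: 'Ford' }, 'http://example');
```

On the page that owns the iframe, a `message` event listener would then pick up each progress value and finally the result.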

And where do JavaScript Promises fit into all this?

Well, to get a good API, I’ve used a Promise implementation that offers continuous feedback via the progress method and handles any success and failure scenarios:

new LittleConvoy.Client('HiddenFrame').send({ url: 'http://example' }, { name: 'Ford'})

.progressed(function (progress) {

// progress contains the percentage completed; this callback will be called 10 times

})

.then(function (data) {

// the call was a success and data contains the result

})

.catch(function (message) {

// the call failed and message contains the error message object

});
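How such an API can be layered on top of a promise is sketched below. This is not LittleConvoy’s implementation: `withProgress` is a hypothetical helper built on the standard `Promise`, and it assumes that progress is only reported asynchronously, after the listener has been attached:

```javascript
// Hypothetical helper: a standard Promise with an extra progressed() method.
function withProgress(executor) {
  var listeners = [];
  var promise = new Promise(function (resolve, reject) {
    // The executor gets a third argument for reporting progress.
    executor(resolve, reject, function notify(percent) {
      listeners.forEach(function (listener) {
        listener(percent);
      });
    });
  });
  // Returns the same promise so then() and catch() can be chained after it.
  promise.progressed = function (listener) {
    listeners.push(listener);
    return promise;
  };
  return promise;
}

var seen = [];
var call = withProgress(function (resolve, reject, notify) {
  setTimeout(function () {
    notify(50);
    notify(100);
    resolve('done');
  }, 0);
}).progressed(function (percent) {
  seen.push(percent);
});
```

Note that `progressed` has to come first in the chain in this sketch, since the promises returned by `then` and `catch` are plain promises without the extra method.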

On the server side a library is added, currently only available for Microsoft ASP.NET MVC. The JSON-producing methods that you already have are just decorated with an attribute that makes sure that everything works:

public class DemoController : Controller

{

[LittleConvoyAction(StartPercent = 40)]

public ActionResult Echo(object source)

{

return new JsonResult {Data = source };

}

}

Demo or it didn’t happen

- A small demo is available here.

- The code is available at GitHub

- The library can be installed via the .NET package manager NuGet as LittleConvoy.

The future

- The transport layer itself is separate from the client, so extra methods can be added. Adding traditional AJAX and WebSockets would be an obvious choice in the future, if it can be done without too many changes on the server side.

- Some Promise implementations offer cancellation, it would be great if you could cancel a call.

- Sending data gives no feedback – it would be great if that was somehow possible.

Related work

- Comet is a collection of technologies that offer push for the browser. It also contains an implementation of iframe communication, but it targets permanent communication channels.

- SignalR is, like Comet, built for push and permanent communication channels.

- Socket.IO is an abstraction over WebSockets that contains a fallback to iframe communication, but it is targeted more at Node.js.

This post is also available in Danish at QED.dk

In my previous post (in Danish) I looked at how to perform asynchronous calls by using promises. Now the time has come to pick the library that fits the next project.

There are a lot of variants and the spread is huge. A single search for “promise” on the Node package manager site npmjs.org gave 1150 libraries which either provide or depend on promises. Of these I have picked 12 different libraries to look at; all are open source and all offer a promise-like structure.

Updates:

- 2014/03/06 – Fixed a few misspellings (@rauschma via Twitter)

- 2014/03/07 – Removed raw sizes, since they didn’t make much sense (@x-skeww via Reddit)

- 2014/03/07 – Added that catiline uses lie underneath. (@CWMma via Twitter)

- 2014/03/07 – Added clarification on what the test does. (@CWMma via Twitter)

The APIs across the libraries are almost alike, so I’ve decided to look at:

Features

What kind of generic promise related features does each library offer?

Size

And I’m thinking mostly browsers here – how many extra bytes will this add to my site?

Speed

How fast are the basic promise operations in the library? You would expect that these will execute many times so this is important.

The libraries

First, an overview of the selected candidates, their licenses and authors. Note that each name links to the source of the library (typically GitHub).

| Library | License | Author | Note |
| --- | --- | --- | --- |
| Bluebird | MIT | Petka Antonov | Loaded with features and should be one of the fastest around, with special emphasis on error handling via good stack traces. Features can be toggled via custom builds. |
| Catiline | MIT | Calvin Metcalf | Mostly designed for handling web workers, but contains a promise implementation. Uses lie underneath. |
| ES6 Promise polyfill | MIT | Jake Archibald | Borrows code from RSVP, but implemented according to the ECMAScript 6 specification. |
| jQuery | MIT | The jQuery Foundation | Classic library for DOM manipulation across browsers. |
| kew | Apache 2.0 | The Obvious Corporation | I’m guessing it is pronounced ‘Q’. Can be considered an optimized edition of Q, but with a smaller feature set. |
| lie | MIT | Calvin Metcalf | |
| MyDeferred | MIT | RubaXa | Small Gist-style implementation. |
| MyPromise | MIT | [email protected] | Small Gist-style implementation. |
| Q | MIT | Kris Kowal | Well-known implementation; a light edition of it can be found in the popular AngularJS framework from Google. |
| RSVP | MIT | Tilde | |
| when | MIT | cujoJS | |
| Yui | BSD | Yahoo! | Yahoo’s library for DOM manipulation across browsers. |

Features

The following is a look at the library feature set, looking only at features directly linked to promises:

| Library | Promises/A+ | Progression | Delayed promise | Parallel synchronization | Web Workers | Cancellation | Generators | Wrap jQuery |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Bluebird | ✓ | ✓ (+389 B) | ✓ (+615 B) | ✓ (+272 B) | – | ✓ (+396 B) | ✓ (+276 B) | ✓ |
| Catiline | ✓ | – | – | ✓ | ✓ | – | – | – |
| ES6 Promise polyfill | ✓ | – | – | ✓ | – | – | – | – |
| jQuery | – | ✓ | – | ✓ | – | – | – | ✓ |
| kew | ✓ | – | ✓ | ✓ | – | – | – | – |
| lie | ✓ | – | – | – | – | – | – | – |
| MyDeferred | ✓ | – | – | ✓ | – | – | – | – |
| MyPromise | – | – | ✓ | ✓ | – | – | – | – |
| Q | ✓ | ✓ | ✓ | ✓ | – | – | ✓ | ✓ |
| RSVP | ✓ | – | – | ✓ | – | – | ✓ | – |
| when | ✓ | ✓ | ✓ | ✓ | – | ✓ | ✓ | ✓ |
| Yui | ✓ | ✓ | – | ✓ | – | – | – | – |

The numbers in parentheses for Bluebird are the additional size in bytes each feature will add.

Promises/A+

Is the Promises/A+ specification implemented?

Progression

Are methods provided for notification on status on asynchronous tasks before the task is completed?

Delayed promise

Can you create a promise that is resolved after a specified delay?

Parallel synchronization

Are there methods for synchronization of multiple operations, can we get a resolved promise when a bunch of other promises are resolved?

Web Workers

Can asynchronous code be executed via a web worker – pushed to a separate execution thread?

Cancellation

Can promise execution be stopped before it is finished?

Generators

Are the upcoming functions built around JavaScript generators supported?

Wrap jQuery

Can promises produced by jQuery be converted to this library’s promises?
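To make two of these features concrete, here is a small sketch of a delayed promise and parallel synchronization. It uses the standard ES2015 `Promise` rather than any of the twelve libraries, and the `delay` helper is a hypothetical name; each library exposes its own equivalents:

```javascript
// Delayed promise: resolves with the given value after ms milliseconds.
function delay(ms, value) {
  return new Promise(function (resolve) {
    setTimeout(function () {
      resolve(value);
    }, ms);
  });
}

// Parallel synchronization: resolves once all the given promises have resolved.
var both = Promise.all([delay(20, 'slow'), delay(5, 'fast')]);

both.then(function (values) {
  // values follows the input order, not the completion order
  console.log(values.join(', '));
});
```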

Size

Every library has been minified via Google’s Closure Compiler, all run at the ‘Simple’ level to prevent any damaging changes. For libraries that support custom builds, I have picked the smallest configuration that still supports promises. The result includes compression in the HTTP stack, so it is actually the number of bytes you would expect each library to add to the application:

Speed

The speed has been measured via the site jsPerf, which gives the option to execute the same tests across a lot of different browsers and platforms, including mobile phones and tablets. The test creates a new promise with each library and measures how much latency is imposed on execution of the asynchronous block (see more detailed explanation here). Note that the test was not created by me, but by a lot of fantastic people (the current version is 91). The numbers are averages across platforms:

Conclusion

Over half of the world’s websites already use jQuery. If you have worked with promises in jQuery, you quickly find that they are inadequate.

I have previously had problems with failing code that doesn’t reject the promise on error as you would expect, but where the error still bubbles up and ends up being a global browser error. The promise specification dictates that errors should be caught and the promise rejected, which is not what happens in jQuery.
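The behaviour the specification asks for can be illustrated with the standard `Promise` (any Promises/A+ compliant library should behave the same way). This sketch is not jQuery code, and the error message is made up:

```javascript
// An exception thrown inside a handler is caught and turned into a
// rejection of the promise returned by then(); it does not escape as a
// global error.
var outcome = Promise.resolve('response')
  .then(function () {
    throw new Error('parsing failed');
  })
  .then(
    function () { return 'not reached'; },
    function (error) { return 'rejected: ' + error.message; }
  );

outcome.then(function (text) {
  console.log(text); // prints "rejected: parsing failed"
});
```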

So if you have a site based on jQuery today, the obvious choice is to pick one of the libraries that offer conversion from jQuery’s unsafe promises to one of the safer kind. If size is a priority, either Q or when is a good suggestion: loaded with features and at a decent speed.

If you are less worried about size, Bluebird is a better choice. The modularity makes it easy to toggle features and it has a significant test suite that covers performance on a lot of other aspects than the single one covered by this post.

If performance is essential, kew is a good bet. A team has picked up Q and looked into lowering its resource requirements. This has resulted in a lightweight but very fast library.

If you are looking for a more limited solution with good speed and without big libraries, the ES6 Promise polyfill is a good choice – then in the long term when the browsers catch up, the library can be removed completely.

This post is also available in Danish at QED.dk

Over the last year we have been travelling a lot. We have visited Vietnam, Thailand, Malaysia, The Netherlands and Spain and even managed to stay a few months in our home country Denmark as well. In that time things have been crazy busy (but then again – everybody seems busy these days, to a point where it doesn’t mean anything to say you’re busy).

Travelling takes a lot of time, but we have also found time to start up our own business, Monzoom, doing consultant work for a couple of Danish companies (e.g. internationally known Danish toy company known for “blocks” – you know who I mean, right?) and we have found time to make our first product xiive. I’m really scratching my own itch with xiive – it’s a social media filtering site with special emphasis on how much a topic is mentioned (seen over time) and comparing these numbers with those of other topics.

There are already many such sites, but the special thing about xiive is that we did not follow the traditional model of letting the user choose x topics (usually 3) to track in private. We have chosen instead to make all the data public so it can be shared, embedded, compared and discussed.

We are currently in private beta, and I can’t wait to show the site to the world. You can sign up for an invite here if you want, but I have to warn you – we don’t really like those “viral” beta invite sites, so you will not get in front of the line by inviting your friends. We think you should only call on your social network if you really mean it and not to get special treatment.

Today we launched a new landing page and I think it is quite the improvement, but you be the judge of that. Here is the old landing page and the new landing page:

Right now we sit in a hotel apartment in Bangkok (no flood in sight here, but some areas are badly hit). We have just been in southern Thailand for a month and we are going to stay in Bangkok for a month and then go home to Denmark for Christmas.

Life is good!

Are you looking for a new business model for your project? Do you have a great idea for a site, but no idea how to monetize it?

You could of course be traditional and offer an ad-based freemium model like spotify.com with a side dish of premium service. That has been done many times, but it is more flexible than the even more traditional model where you just let your users pay.

Maybe you could offer a free service and use it to gather large amounts of data and sell them like Patientslikeme.com? Or simply take a commission (or a posting fee) for facilitating contact/services to/from other companies like flattr.com, airbnb.com or GroupOn.com?

There is also a model where you let your customers pay what they want (encouraged by an anchor price of what other users have paid) and even let the users decide how much of the money should go to charity. An example of this model can be found at humblebundle.com.

Or if your main product is free, how about “in-app” sales like Haypi Kingdom or my favorite example, Farmville? And if you want the user to lose track of the “real-world cost”, then make your own monetary system.

If you want your users to create something of value, then make a platform that lets them co-create and get a share in the profit, like Quicky.com. Helping other creative people monetize their ideas – that’s a great business model! Almost a meta business model.

Source: These were all picked from the presentation below, “10 business models that rocked 2010”.

Less is more. That was my big lesson in 2010. I used to have clutter, mess, piles and heaps of stuff – in my home and in my office. Now my things fit into a suitcase and a backpack. I can’t buy things that I am not willing to carry with me every day, so I never shop anymore except to replace other things. Material things have never meant less to me than they do now.

It reminds me of the saying: “If you own more than seven things, the things will own you”. The simplification I have done in my life really feels like freedom. I can honestly say that I don’t miss any of my stuff. Back home we had a “game room” with several XBoxes, a Wii and a Playstation as well as a home movie theatre; I loved it and spent a lot of time there, and I really thought I would miss it, but I don’t. What I miss from back home are the people: friends, colleagues and family. But actually I speak more to my close family now than I did when I lived less than 100 kilometers away from them (thank you, Skype).

Money has never been a big thing for me, and that is probably because I have just been lucky to be able to make a fine living doing what I love. The IT business is a generous place to be. Now I think even less about it and also spend much less. Living in Asia can be cheap even while enjoying some luxury. Cutting down on our spending also has the nice side effect that we don’t have to work as many hours on profitable projects and can devote more time to pet projects, sightseeing or just each other.

Some days I wake up and I can’t believe how lucky I am, thinking that this can’t last. But I just can’t stay worried; the sun is shining and I just keep telling myself: Don’t worry – be happy.

We Danes are known for our happiness, having been listed as the happiest people in the world several times by the OECD. The reason often cited (by Americans) is that we expect less from life. I don’t think that is the true answer; we expect a lot from life, just not only material things. We value life experiences and quality over quantity, and right now I’m taking that to an extreme and loving every minute of it.

Less IS more.